I have written a lot about shard theory over the last year. I’ve poured dozens of hours into theorycrafting, communication, and LessWrong comment threads. I pored over theoretical alignment concerns with exquisite care and worry. I even read a few things that weren’t blog posts on LessWrong.[1] In other words, I went all out.

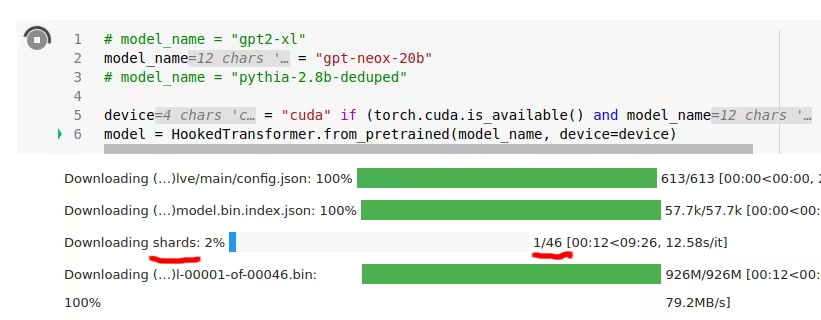

Last month, I was downloading gpt-neox-20b when I noticed the following:

gpt-neox-20b has shards; it has exactly forty-six.

I’ve concluded the following:

- Shard theory is correct (at least, for gpt-neox-20b).

- I wasted a lot of time arguing on LessWrong when I could have just touched grass and run the cheap experiment of actually downloading a model and observing what happens.

- I totally underappreciated how much work the broader AI community[2] has done on alignment. They are literally able to print out how many shards an “uninterpretable” model like gpt-neox-20b has.

- They don’t whine about how tough these models are to interpret. They just got the damn job done.

- This really makes Team Shard’s recent work look naïve and incomplete. I think we found subcircuits of a cheese-shard. The ML community just found all 46 shards in gpt-neox-20b, and nonchalantly printed that number for us to read.

- This incident is embarrassing for me, even though shard theory just got confirmed. I seriously spent months arguing about this stuff? Cringe.

- I did a bad job of interpreting theoretical arguments, given that I was still uncertain after months of thought. Probably this is hard and we are bad at it.

- Stop arguing in huge comment threads about what highly speculative approaches are going to work, and start making your beliefs pay rent by finding ways to experimentally test them.

- See how well you can predict AI alignment results today before trying to predict what happens for 500IQ gpt-5.

- I don’t ever want to hear another “but I won’t be able to learn until the intelligence explosion happens or not.” Correct theories leave many clues and hints, and some of those are going to be findable today. If even a navel-gazing edifice like shard theory can get instantly experimentally confirmed, so can your theory.
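For anyone who wants to rerun the cheap experiment, here is a minimal sketch of counting shards. It assumes the checkpoint ships with a Hugging Face-style index JSON whose `weight_map` maps parameter names to shard file names. The helper `count_shards`, the toy parameter names, and the toy file names are all made up for illustration; this is not necessarily how the number above was printed.

```python
import json


def count_shards(index: dict) -> int:
    """Count the distinct checkpoint files referenced by a sharded-checkpoint index."""
    # Many parameters can live in the same file, so deduplicate the file names.
    return len(set(index["weight_map"].values()))


# Toy index in the style of a sharded-checkpoint `*.index.json` file.
# Every name below is hypothetical and exists only for this example.
toy_index = json.loads("""
{
  "metadata": {"total_size": 123},
  "weight_map": {
    "layers.0.weight": "model-00001-of-00003.bin",
    "layers.0.bias": "model-00001-of-00003.bin",
    "layers.1.weight": "model-00002-of-00003.bin",
    "layers.2.weight": "model-00003-of-00003.bin"
  }
}
""")

print(count_shards(toy_index))  # prints 3
```

To try it on a real checkpoint, load that checkpoint's index file with `json.load` and pass the resulting dict to `count_shards`.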

I then had gpt-4 identify some of the 46 shards:

- The Procrastination Shard
  - This shard makes the AI model continually suggest that users read just one more LessWrong post or engage in another lengthy comment thread before taking any real-world action.

- Hindsight Bias Shard
  - This shard leads the model to believe it knew the answer all along after learning new information, making it appear much smarter in retrospect.

- The “Armchair Philosopher” Shard
  - With this shard, the AI can generate lengthy and convoluted philosophical arguments on any topic, often without any direct experience or understanding of the subject matter.

- Argumentative Shard
  - A shard that compels the model to challenge every statement and belief, regardless of how trivial or uncontroversial it may be.

- Trolley Problem Shard
  - This shard makes the model obsess over hypothetical moral dilemmas and ethical conundrums, while ignoring more practical and immediate concerns.

- The Existential Dread Shard
  - This shard causes the AI to frequently bring up the potential risks of AI alignment and existential catastrophes, even when discussing unrelated topics.

I’m pleasantly surprised that gpt-neox-20b shares values which I (and other LessWrong users) have historically demonstrated. The fact that gpt-neox-20b and LessWrong share many shards makes me more optimistic about alignment overall, since it implies convergence in the values of real-world trained systems.

(As a corollary, this demonstrates that LLMs can make serious alignment progress.)

Although I’m embarrassed and humbled by this experience, I’m glad that shard theory is true. Here’s to—next time—only taking three months before running the first experiment!


alex@turntrout.com

Footnotes

[1] DM me if you want links to websites where you can find information which is not available on LessWrong!

[2] “The broader AI community” taken in particular to include more traditional academics, like those at EleutherAI.