
This post treats reward functions as “specifying goals”, in some sense. As I explained in Reward Is Not The Optimization Target, this is a misconception that can seriously damage your ability to understand how AI works. Rather than “incentivizing” behavior, reward signals are (in many cases) akin to a per-datapoint learning rate. Reward chisels circuits into the AI. That’s it!

Summary of the current power-seeking theorems

Give me a utility function, any utility function, and for most ways I could jumble it up (most ways I could permute which outcomes get which utility), the agent will seek power.

This kind of argument assumes that the set {utility functions we might specify} is closed under permutation. This assumption is unrealistic. Practically speaking, we reward agents based off of observed features of the agent’s environment.

For example, Pac-Man eats dots and gains points. A football AI scores a touchdown and gains points. A robot hand solves a Rubik’s cube and gains points. But most permutations of these objectives are implausible because they’re high-entropy and complex: they assign high reward to one state and low reward to another without a simple generating rule that grounds out in observed features. Practical objective specification doesn’t allow that many degrees of freedom in what states get what reward.

I explore how instrumental convergence works in this case. I also walk through how these new results retrodict the fact that instrumental convergence basically disappears for agents with utility functions over action-observation histories.

Case Studies

Gridworld

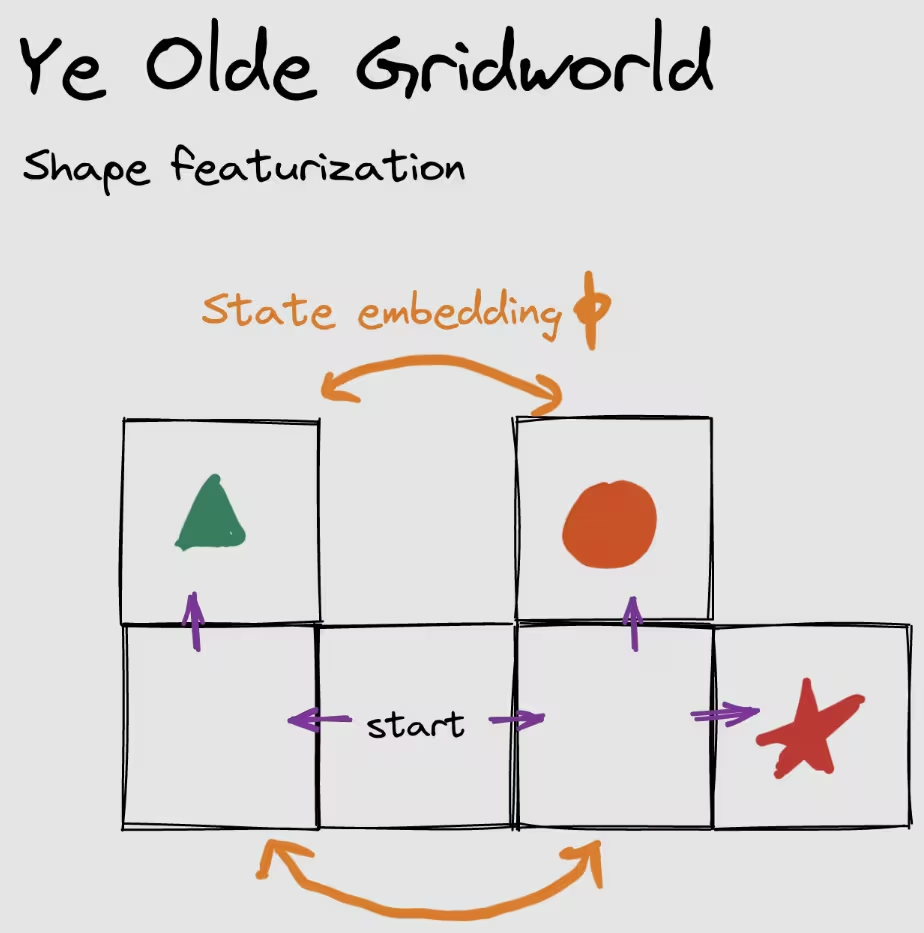

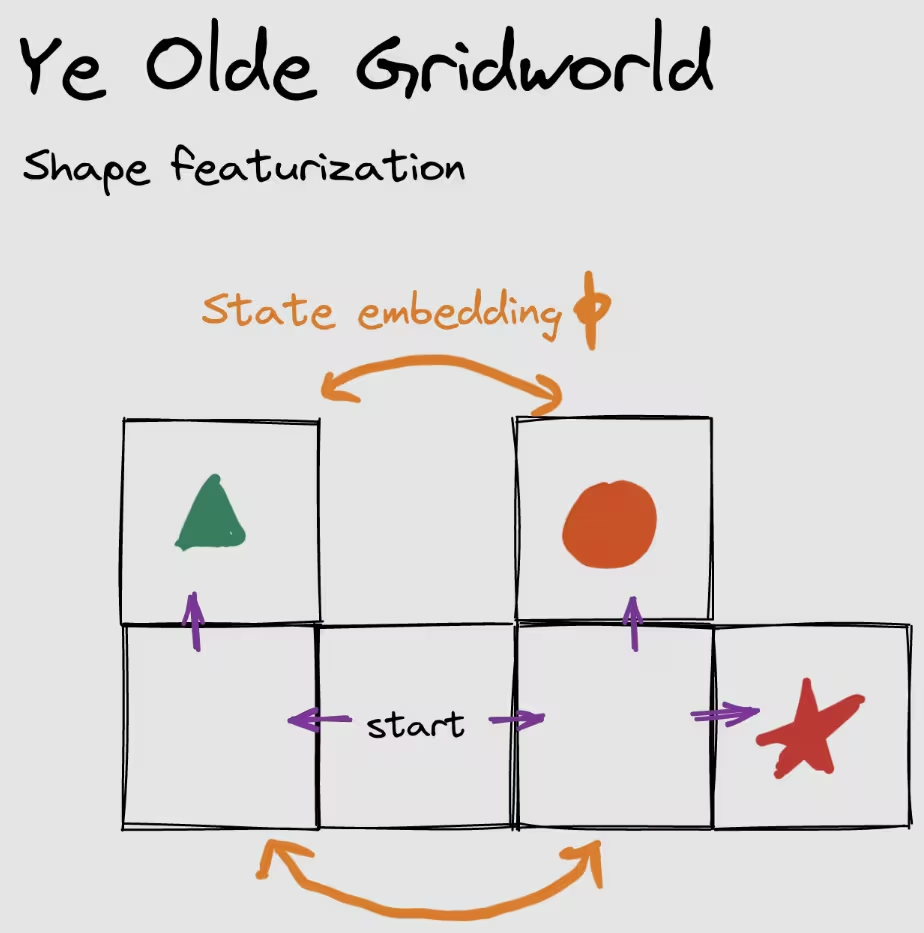

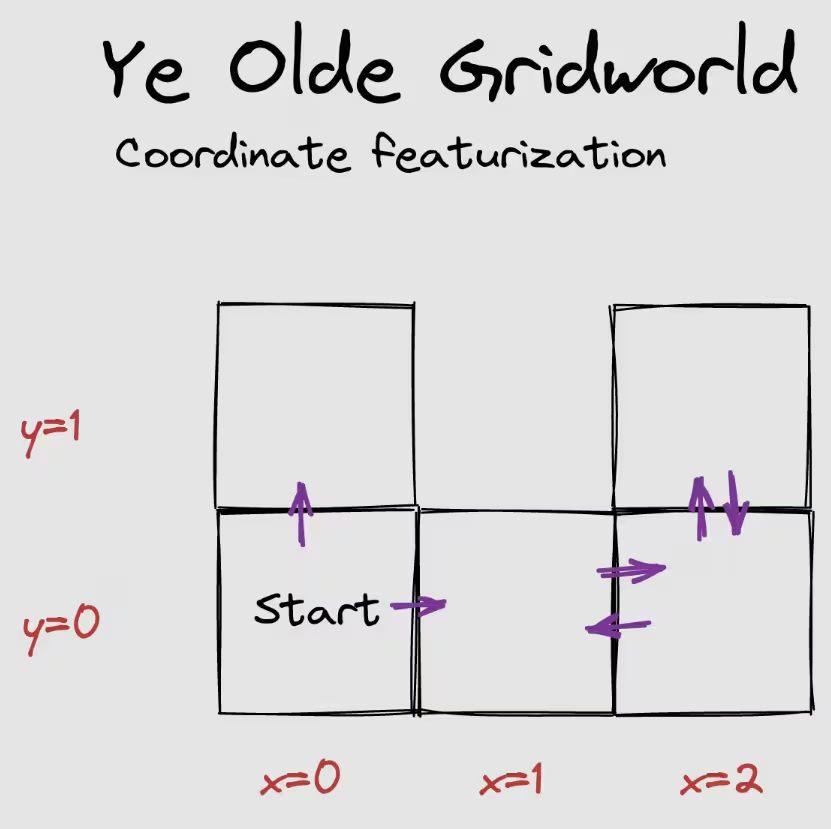

Consider the following environment, where the agent can either stay put or move along a purple arrow.

Suppose the agent gets some amount of reward each timestep, and it’s choosing a policy to maximize its average per-timestep reward. Previous results tell us that for generic reward functions over states, at least half of them incentivize going right. Two terminal states are on the left and three are on the right. Since 3 > 2, we conclude that at least 1/2 of objectives incentivize going right.
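To make the counting concrete, here is a minimal sketch that brute-forces the orbit of one reward function. It assumes (this is not spelled out above) that each terminal state is absorbing, so that maximizing average per-timestep reward amounts to picking the best reachable terminal state. The state indices are hypothetical labels.

```python
from fractions import Fraction
from itertools import permutations

# Hypothetical labelling: terminal states 0-1 lie on the left, 2-4 on the right.
LEFT = (0, 1)
RIGHT = (2, 3, 4)


def prefers_right(reward):
    """True when the best right terminal state strictly beats the best left one."""
    return max(reward[s] for s in RIGHT) > max(reward[s] for s in LEFT)


def orbit_fraction_right(reward):
    """Fraction of distinct state-permuted variants of `reward` that prefer right."""
    orbit = set(permutations(reward))
    return Fraction(sum(prefers_right(r) for r in orbit), len(orbit))


print(orbit_fraction_right((0.3, 1.0, -2.0, 0.7, 5.0)))  # 3/5
```

For this particular reward function the fraction is 3/5, which is consistent with the guaranteed lower bound of 1/2.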

It’s damn hard to have so many degrees of freedom that you’re specifying a potentially independent utility number for each state.¹ Meaningful utility functions will be featurized in some sense: they depend only on certain features of the world state, of how the outcomes transpired, and so on. If the featurization is linear, then it’s particularly easy to reason about power-seeking incentives.

Shape featurization

Let $\text{feat}(s)$ be the feature vector for state $s$, where the first entry is 1 iff the agent is standing on the triangle. The second and third entries represent the circle and the star, respectively. That is, the featurization only records what shape the agent is standing on. Suppose the agent makes decisions in a way which depends only on the featurized reward of a state: $R(s) = \text{feat}(s)^\top \alpha$, where $\alpha \in \mathbb{R}^3$ expresses the feature coefficients. Then the relevant terminal states are only {triangle, circle, star}, and we conclude that 2/3 of coefficient vectors incentivize going right. We state the argument precisely using orbits. For every coefficient vector $\alpha$, at least² 2/3 of its permuted variants make the agent prefer to go right.

This particular featurization increases the strength of the orbit-level incentives: before, we could only guarantee 1/2-strength power-seeking tendency, whereas now we guarantee 2/3-level.³ ⁴
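The 2/3 figure can be checked by enumerating the orbit of a coefficient vector. The sketch below assumes a layout in which one shape is reachable on the left, the other two on the right, and each side also has a shapeless terminal state whose feature vector is zero. Which shape sits where is an assumption made for illustration.

```python
from fractions import Fraction
from itertools import permutations

# Assumed layout: one shape on the left, two on the right,
# plus a shapeless (zero-vector) terminal state on each side.
LEFT_FEATS = [(1, 0, 0), (0, 0, 0)]
RIGHT_FEATS = [(0, 1, 0), (0, 0, 1), (0, 0, 0)]


def value(feats, alpha):
    """Best featurized reward feat(s) . alpha among the reachable terminal states."""
    return max(sum(f * a for f, a in zip(feat, alpha)) for feat in feats)


def orbit_fraction_right(alpha):
    """Fraction of distinct permutations of alpha for which right is (weakly) optimal."""
    orbit = set(permutations(alpha))
    good = sum(value(RIGHT_FEATS, a) >= value(LEFT_FEATS, a) for a in orbit)
    return Fraction(good, len(orbit))


print(orbit_fraction_right((3.0, 1.0, 2.0)))  # 2/3
print(orbit_fraction_right((1.0, 1.0, 1.0)))  # 1
```

The second call illustrates why the guarantee is "at least" 2/3: a constant coefficient vector has a one-element orbit, and going right is trivially optimal for it.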

There’s another point I want to make in this tiny environment.

Suppose we find an environmental symmetry $\phi$ which lets us apply the original power-seeking theorems to raw reward functions over the world state. Letting $\mathbf{e}_s$ be a column vector with an entry of 1 at state $s$ and 0 elsewhere, in this environment the symmetry is enforced by a state permutation $\phi$ which maps the left terminal states' vectors $\mathbf{e}_s$ into those of the right terminal states.

Given a state featurization, and given that we know that there’s a state-level environmental symmetry $\phi$, when can we conclude that there’s also feature-level power-seeking in the environment?

Here, we’re asking “if reward is only allowed to depend on how often the agent visits each shape, and we know that there’s a raw state-level symmetry, when do we know that there’s a shape-feature embedding from the left shape feature vectors into the right shape feature vectors?”

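In terms of “what choice lets me access ‘more’ features?”, this environment is relatively easy: look, there are twice as many shapes on the right. More formally, the left feature set consists of one shape's indicator vector plus the zero vector, while the right feature set consists of the other two shapes' indicator vectors plus the zero vector. The left set can therefore be permuted two separate ways into the right set (since the zero vector isn’t affected by feature permutations).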

I’m gonna play dumb and walk through to illustrate a more important point about how power-seeking tendencies are guaranteed when featurizations respect the structure of the environment.

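Consider the left shape state $s$. We permute it to be a right shape state $s'$ using the state-level symmetry $\phi$ (because $\phi(s) = s'$), and then featurize it to get a feature vector with a 1 in the entry for the right shape and 0 elsewhere.

Alternatively, suppose we first featurize $s$ to get a feature vector with a 1 in the entry for the left shape and 0 elsewhere. Then we swap which features are which, by switching the left-shape and right-shape entries. Then we get a feature vector with a 1 in the entry for the right shape and 0 elsewhere, which is the same result as above.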

The shape featurization plays nice with the actual nitty-gritty environment-level symmetry. More precisely, a sufficient condition for feature-level symmetries: (Featurizing and then swapping which features are which) commutes with (swapping which states are which and then featurizing).⁵ And where there are feature-level symmetries, just apply the normal power-seeking theorems to conclude that there are decision-making tendencies to choose larger sets of features.
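The commutation condition can be checked mechanically on a toy model. In the sketch below, the state names, the state permutation `PHI`, and the feature permutation `FEATURE_PERM` are all hypothetical stand-ins chosen to mirror the walkthrough above. They are not taken from the original figures.

```python
# Hypothetical states: one shape and one blank on the left; two shapes and one blank on the right.
FEAT = {
    "L_shape": (1, 0, 0),
    "L_blank": (0, 0, 0),
    "R_shape1": (0, 1, 0),
    "R_shape2": (0, 0, 1),
    "R_blank": (0, 0, 0),
}

# Assumed state-level symmetry: an involution swapping the left states with two right states.
PHI = {
    "L_shape": "R_shape2", "R_shape2": "L_shape",
    "L_blank": "R_blank", "R_blank": "L_blank",
    "R_shape1": "R_shape1",
}

# Candidate feature permutation: swap feature indices 0 and 2.
FEATURE_PERM = (2, 1, 0)


def permute_features(vec, perm):
    """Relabel features: entry i of the input moves to position perm[i]."""
    out = [0] * len(vec)
    for i, x in enumerate(vec):
        out[perm[i]] = x
    return tuple(out)


def commutes(feat, phi, perm):
    """Check that featurize-then-permute-features equals permute-states-then-featurize."""
    return all(feat[phi[s]] == permute_features(feat[s], perm) for s in feat)


print(commutes(FEAT, PHI, FEATURE_PERM))  # True
print(commutes(FEAT, PHI, (1, 0, 2)))     # False
```

The second call shows that not every feature relabelling respects a given state symmetry: swapping the wrong pair of features breaks the condition.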

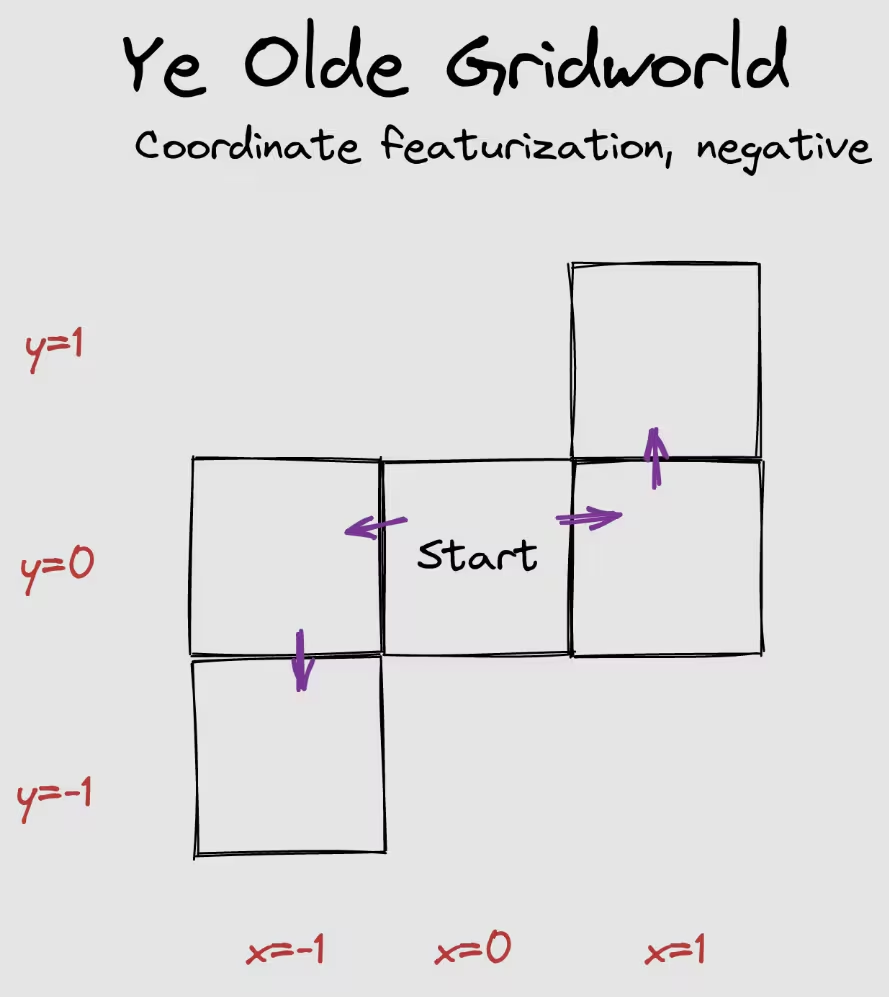

Coordinate featurization

In a different featurization, suppose the featurization is the agent’s coordinates: $\text{feat}(s) = (x, y)^\top$ when the agent is at position $(x, y)$ in state $s$.

Given the start state (taken here to be the origin), if the agent goes up, its reachable feature vector is just $(0, 1)^\top$, whereas the agent can induce $(1, 0)^\top$ if it goes right. Therefore, whenever up is strictly optimal for a featurized reward function, we can permute that reward function’s feature weights by swapping the $x$- and $y$-coefficients ($\alpha_1$ and $\alpha_2$, respectively). Again, this new reward function is featurized, and it makes going right strictly optimal. So the usual arguments ensure that at least half of these featurized reward functions make it optimal to go right.
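The coefficient-swapping argument can be sketched in a few lines. The reachable feature vectors below are assumptions (start state at the origin, one step up or one step right), and the coefficient values are arbitrary illustrative numbers.

```python
# Assumed reachable feature vectors (x, y), with the start state at the origin.
UP_FEATS = [(0, 1)]
RIGHT_FEATS = [(1, 0)]


def best(feats, alpha):
    """Highest featurized reward alpha . (x, y) over the reachable vectors."""
    return max(alpha[0] * x + alpha[1] * y for x, y in feats)


def strictly_prefers(a_feats, b_feats, alpha):
    """True when option a's best reachable vector strictly beats option b's."""
    return best(a_feats, alpha) > best(b_feats, alpha)


alpha = (0.5, 2.0)              # up is strictly optimal here: 2.0 > 0.5
swapped = (alpha[1], alpha[0])  # swap the x- and y-coefficients

print(strictly_prefers(UP_FEATS, RIGHT_FEATS, alpha))    # True
print(strictly_prefers(RIGHT_FEATS, UP_FEATS, swapped))  # True
```

Every up-preferring coefficient vector is thereby paired with a right-preferring one in the same orbit, which is the pairing the "at least half" claim relies on.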

Sometimes, these similarities won’t hold, even when it initially looks like they “should”!

| Action | Feature vectors available |
|---|---|
| Left | |
| Right | |

Switching feature labels cannot copy the left feature set into the right feature set! There’s no way to just apply a feature permutation to the left set, and thereby produce a subset of the right feature set. Therefore, the theorems don’t apply, and so they don’t guarantee anything about how most permutations of every reward function incentivize some kind of behavior.

On reflection, this makes sense. If every feature weight is negative, then there’s no way the agent will want to go right. Instead, it’ll go for the negative feature values offered by going left. This will hold for all permutations of this feature labelling, too. So the orbit-level incentives can’t hold.

If the agent can be made to “hate everything” (all feature weights are negative), then it will pursue opportunities which give it negative-valued feature vectors, or at least strive for the oblivion of the zero feature vector. Vice versa for if it positively values all features.

StarCraft II

Consider a deep RL training process where the agent’s episodic reward is featurized into a weighted sum of the different resources the agent has at the end of the game, with weight vector $\alpha$. For simplicity, we fix an opponent policy and a learning regime (number of epochs, learning rate, hyperparameters, network architecture, and so on). We consider the effects of varying the reward feature coefficients $\alpha$.

- Outcomes of interest
  - Game state trajectories.
- AI decision-making function
  - $f(T \mid \alpha)$ returns the probability that, given our fixed learning regime and reward feature vector $\alpha$, the training process produces a policy network whose rollouts instantiate some trajectory $\tau \in T$.
- What the theorems say
  - If $\alpha$ is the zero vector, the agent gets the same reward for all trajectories, and so gradient descent does nothing, and the randomly initialized policy network quickly loses against any reasonable opponent. No power-seeking tendencies if this is the only plausible parameter setting.
  - If $\alpha$ only has negative entries, then the policy network quickly learns to throw away all of its resources and not collect any more. If and only if this has been achieved, the training process is indifferent to whether the game is lost. No real power-seeking tendencies if it’s only plausible that we specify a negative vector.
  - If $\alpha$ has a positive entry, then policies learn to gather as much of that resource as possible. In particular, there aren’t orbit elements with positive entries but where the learned policy tends to just die, and so we don’t even have to check that the permuted variants of such feature vectors are also plausible. Power-seeking occurs.
- This reasoning depends on which kinds of feature weights are plausible, and so wouldn’t have been covered by the previous results.

Minecraft

Similar setup to StarCraft II, but now the agent’s episode reward is $\alpha_1 \cdot$ (Amount of iron ore in chests within 100 blocks of spawn after 2 in-game days) $+\ \alpha_2 \cdot$ (Same but for coal), where $\alpha_1, \alpha_2 \in \mathbb{R}$ are scalars (together, they form the coefficient vector $\alpha$).

- Outcomes of interest
  - Game state trajectories.
- AI decision-making function
  - $f(T \mid \alpha)$ returns the probability that, given our fixed learning regime and feature coefficients $\alpha$, the training process produces a policy network whose rollouts instantiate some trajectory $\tau \in T$.
- What the theorems say
  - If $\alpha$ is the zero vector, the analysis is the same as before. No power-seeking tendencies. In fact, the agent tends to not gain power because it has no optimization pressure steering it towards the few action sequences which gain the agent power.
  - If $\alpha$ only has negative entries, the agent definitely doesn’t hoard resources in chests. Otherwise, there’s no real reward signal and gradient descent doesn’t do a whole lot due to sparsity.
  - If $\alpha$ has a positive entry, and if the learning process is good enough, agents tend to stay alive. If the learning process is good enough, there just won’t be a single feature vector with a positive entry which tends to produce non-self-empowering policies.

The analysis so far is nice to make a bit more formal, but it isn’t really pointing out anything that we couldn’t have figured out pre-theoretically. I think I can sketch out more novel reasoning, but I’ll leave that to a future post.

Beyond The Featurized Case

Consider some arbitrary set $\mathcal{U}$ of “plausible” utility functions over outcomes. If we have the usual big set $B$ of outcome lotteries (which possibilities are, in the view of this theory, often attained via “power-seeking”), and $B$ contains copies of some smaller set $A$ via environmental symmetries $\phi_1, \ldots, \phi_n$, then when are there orbit-level incentives within $\mathcal{U}$? That is, when will most reasonable variants of utility functions make the agent more likely to select $B$ rather than $A$?

When the environmental symmetries can be applied to the $A$-preferring variants, in a way which produces another plausible objective. Slightly more formally, if, for every plausible utility function $u \in \mathcal{U}$ where the agent has a greater chance of selecting $A$ than of selecting $B$, we have the membership $\phi_i \cdot u \in \mathcal{U}$ for all $i = 1, \ldots, n$.
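The closure condition lends itself to a direct check on finite toy cases. The following sketch uses a hypothetical four-outcome setup in which an agent simply picks the highest-utility outcome. The outcome sets, the symmetries, and the two candidate plausible sets are illustrative assumptions.

```python
from itertools import permutations

# Hypothetical toy setup: four outcomes; A = {0}, B = {1, 2, 3}.
A, B = (0,), (1, 2, 3)

# Assumed symmetries: each phi swaps outcome 0 with one outcome in B.
SYMMETRIES = [(1, 0, 2, 3), (2, 1, 0, 3), (3, 1, 2, 0)]


def apply(phi, u):
    """Permuted utility (phi . u): outcome phi[o] receives the utility u gave to o."""
    out = [None] * len(u)
    for o, val in enumerate(u):
        out[phi[o]] = val
    return tuple(out)


def prefers_A(u):
    """True when the best outcome in A strictly beats the best outcome in B."""
    return max(u[o] for o in A) > max(u[o] for o in B)


def closed_for_A_preferring(plausible):
    """True iff phi . u is plausible for every A-preferring plausible u and every phi."""
    return all(apply(phi, u) in plausible
               for u in plausible if prefers_A(u)
               for phi in SYMMETRIES)


full_orbit = set(permutations((4, 1, 2, 3)))  # closed under every permutation
only_A_lover = {(4, 1, 2, 3)}                 # no B-preferring variants are plausible

print(closed_for_A_preferring(full_orbit))    # True
print(closed_for_A_preferring(only_A_lover))  # False
```

When the check fails, as for the second set, the orbit-level argument gives no guarantee about how often the agent selects the larger set.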

This covers the totally general case of arbitrary sets of utility function classes we might use. (And, technically, “utility function” is decorative at this point—it just stands in for a parameter which we use to retarget the AI policy-production process.)

The general result highlights how the plausible set $\mathcal{U}$ affects what conclusions we can draw about orbit-level incentives. All else equal, being able to specify more plausible objective functions for which the agent favors the larger set $B$ means that we’re more likely to ensure closure under certain permutations. Similarly, adding plausible $B$-dispreferring objectives makes it harder to satisfy the membership condition $\phi_i \cdot u \in \mathcal{U}$, which makes it harder to ensure closure under certain permutations, which makes it harder to prove instrumental convergence.

Revisiting How The Environment Structure Affects Power-Seeking Incentive Strength

Structural assumptions on utility really do matter when it comes to instrumental convergence:

| Setting | Strength of instrumental convergence |
|---|---|
| $u_{\text{AOH}}$ | Nonexistent |
| $u_{\text{OH}}$ | Strong |
| State-based objectives (e.g. state-based reward in MDPs) | Moderate |

Environmental structure can cause instrumental convergence, but (the absence of) structural assumptions on utility can make instrumental convergence go away (for optimal agents).

In particular, for the MDP case, I wrote:

MDPs assume that utility functions have a lot of structure: the utility of a history is time-discounted additive over observations. Basically, $u(o_1 o_2 \ldots) = \sum_{t=1}^{\infty} \gamma^{t-1} R(o_t)$, for some discount rate $\gamma$ and reward function $R$ over observations. And because of this structure, the agent’s average per-timestep reward is controlled by the last observation it sees. There are exponentially fewer last observations than there are observation histories. Therefore, in this situation, instrumental convergence is exponentially weaker for reward functions than for arbitrary $u_{\text{OH}}$.

This involves a featurization which takes in an action-observation history, ignores the actions, and spits out time-discounted observation counts. The utility function is then over observations (which are just states in the MDP case). Here, the symmetries can only be over states, and not histories. No matter how expressive the plausible state-based-reward-set is, it can’t compete with the exponentially larger domain of the observation-history-based-utility-set. The featurization has thus limited how strong instrumental convergence can get by projecting the high-dimensional $u_{\text{OH}}$ into the lower-dimensional $u_{\text{State}}$.
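As a rough illustration of both points (the additive, time-discounted structure and the size gap between observations and observation histories), here is a small sketch. The reward table, discount rate, and counts are hypothetical values chosen for the example.

```python
def discounted_utility(observations, reward, gamma):
    """Sum of gamma^(t-1) * R(o_t) over a finite history prefix, with t starting at 1."""
    return sum(gamma ** t * reward[o] for t, o in enumerate(observations))


def count_histories(num_observations, horizon):
    """Number of distinct length-`horizon` observation histories."""
    return num_observations ** horizon


reward = {"a": 1.0, "b": 0.0}  # hypothetical reward function over observations
print(discounted_utility(["a", "b", "a"], reward, gamma=0.5))  # 1.25
print(count_histories(num_observations=5, horizon=10))          # 9765625
```

With 5 observations there are only 5 candidate last observations, against almost ten million length-10 histories, which is the sense in which a state-based reward has exponentially fewer degrees of freedom.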

However, when we go from $u_{\text{AOH}}$ to $u_{\text{OH}}$, we’re throwing away even more information: information about the actions! This mapping is also a sparse projection. So what’s up?

When we throw away info about actions, we’re breaking some symmetries which made instrumental convergence disappear in the $u_{\text{AOH}}$ case. In any deterministic environment, there are equally many $u_{\text{AOH}}$ which make me want to go e.g. down (and, say, die) as which make me want to go up (and survive). This equivalence holds because of symmetries which swap the value of an optimal AOH with the value of an AOH going the other way.

When we restrict the utility function to not care about actions, we can only modify how it cares about observation histories. Here, the plausible set is no longer closed under the AOH environmental symmetry which previously ensured balanced statistical incentives, so the restricted plausible set theorem no longer applies. Thus, instrumental convergence appears when restricting from $u_{\text{AOH}}$ to $u_{\text{OH}}$.
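A tiny enumeration makes the contrast visible. The sketch below assumes a hypothetical two-step deterministic environment in which going down first kills the agent, after which every action yields the same observation. It then counts, over all assignments of distinct utilities, how often going up is strictly preferred.

```python
from fractions import Fraction
from itertools import permutations

# Hypothetical histories, written as (action, observation, action, observation).
AOH_UP = [("up", "alive", "left", "x"), ("up", "alive", "right", "y")]
AOH_DOWN = [("down", "dead", "left", "dead"), ("down", "dead", "right", "dead")]


def observations_only(aoh):
    """Drop the actions, keeping only the observation history."""
    return aoh[1::2]


def fraction_preferring_up(up_histories, down_histories):
    """Over all assignments of distinct utilities to histories, how often is up strictly best?"""
    histories = sorted(set(up_histories) | set(down_histories))
    n_up = 0
    total = 0
    for values in permutations(range(len(histories))):
        u = dict(zip(histories, values))
        n_up += max(u[h] for h in up_histories) > max(u[h] for h in down_histories)
        total += 1
    return Fraction(n_up, total)


print(fraction_preferring_up(AOH_UP, AOH_DOWN))  # 1/2
oh_up = [observations_only(h) for h in AOH_UP]
oh_down = [observations_only(h) for h in AOH_DOWN]
print(fraction_preferring_up(oh_up, oh_down))    # 2/3
```

Over action-observation histories the two choices are perfectly balanced, because both dead-end histories remain distinct objects that utility can favor. Once actions are dropped, they collapse into a single observation history, and surviving wins in 2/3 of the assignments.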

Thanks

I thank Justis Mills for feedback on a draft.


alex@turntrout.com

Appendix: tracking key limitations of the power-seeking theorems

From last time:


- The results aren’t first-person: They don’t deal with the agent’s uncertainty about what environment it’s in.
- Not all environments have the right symmetries.
  - But most ones we think about seem to.
- Don’t account for the ways in which we might practically express reward functions. (This limitation was handled by this post.)

I think it’s reasonably clear how to apply the results to realistic objective functions. I also think our objective specification procedures are quite expressive, and so the closure condition will hold and the results go through in the appropriate situations.

Footnotes

1. It’s not hard to have this many degrees of freedom in such a small toy environment, but the toy environment is pedagogical. It’s practically impossible to have full degrees of freedom in an environment with a trillion states.

2. “At least,” and not “exactly.” If $\alpha$ is a constant vector, it’s optimal to go right for every permutation of $\alpha$ (trivially so, since $\alpha$’s orbit has a single element: itself).

3. Even under my more aggressive conjecture about “fractional terminal state copy containment,” the unfeaturized situation would only guarantee 3/5-strength orbit incentives, strictly weaker than 2/3-strength.

4. Certain trivial featurizations can decrease the strength of power-seeking tendencies, too. For example, suppose the featurization is 2-dimensional: one entry is 1 iff the agent is alive in the state, and the other is 1 iff the agent is dead. This featurization will tend to produce 1:1 survive / die orbit-level incentives. The incentives for raw reward functions may be 1,000:1 or stronger.

5. There’s something abstraction-adjacent about this result (proposition D.1 in the linked Overleaf paper). The result says something like “do the grooves of the agent’s world model featurization respect the grooves of symmetries in the structure of the agent’s environment?”, and if they do, bam, sufficient condition for power-seeking under the featurized model. I think there’s something important here about how good world-model-featurizations should work, but I’m not sure what that is yet.

   I do know that “the featurization should commute with the environmental symmetry” is something I’d thought, in basically those words, no fewer than 3 times, as early as summer 2021, without explicitly knowing what that should even mean.